How To Conduct Awareness Briefings

Transcript for the video blog:

I'm going to put a visual up, so you can see…

There's our logo, that's our website at www.click-360.com and that's my email address: cpn@click-360.com. I invite you to get in touch directly if you want to leave a comment or raise a question.

This video is quite a short one. I just wanted to put together some information prompted by the research that we're currently undertaking with organizations that use 360-degree feedback. We're trying to work out:

- how they use it

- what results they're getting, what's working well, what's not working so well, etc.

We're going to publish that as a paper within the next few weeks. That research follows on from the research we did a few years ago and you can get a copy of that paper from our website (insert link). But for the main purpose of this presentation, I wanted to pick up on something that we are finding…I mean, the research isn't over, we're still doing it…but this is an early finding and I wanted to share it with you right away.

Really what we're talking about here is how organizations introduce their people to 360-degree feedback, whether or not this is the first time they've done it. Most of the research is telling us that organizations are relying upon the automated emails that come out from whichever provider is giving them the tool - giving them the questionnaire - and that's fine.

And what we've discovered is that the introduction comes in the form of a quite detailed email, almost like joining instructions for a program, but there is no substitute in our experience for what we call the awareness briefing.

So I'm going to bring that up now and talk it through. What we're talking about is a video meeting, so it's like this: it's a Zoom session or a Microsoft Teams session or equivalent. The idea is to run the participants through a briefing. Participant is the name we give to the person that's getting the 360, so in other words, the person in the middle. Everybody else that's providing feedback to the participants, we call those raters. We're not making this presentation to raters; we recommend that you just do it with the participants. There's a little piece at the end I'll talk about, which is where the participant gets involved with the raters.

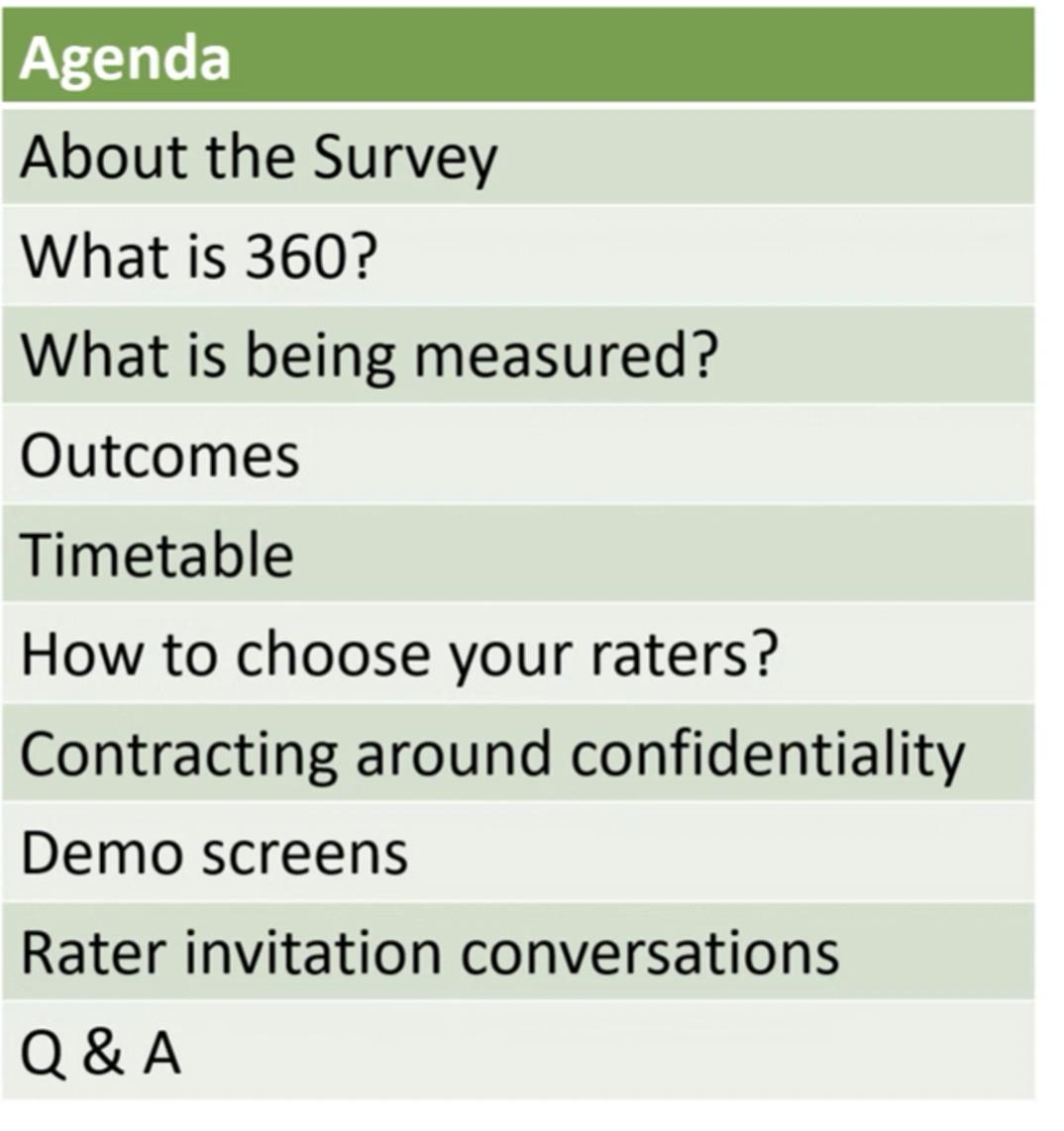

Let me explain through the slide that's on the screen. So, the agenda…

About the Survey

First of all, we like to give a little bit of background about the survey itself: why is the organization doing this? Why are they doing it now? There are usually some people in the room that have done 360 and others that haven't.

What is 360?

So, we'll spend a bit of time talking about 360 and, by the way, it’s very important to understand the receptivity level of the people in the room, because if someone has done a 360 and it didn't go so well, there may be some anxiety or concern about engaging with this new program. So we do talk about that. We are trying to alleviate any fears, concerns, worries. We want those to be left on the doorstep and we want people to be able to fully engage and participate in the program.

What is being measured?

So, what is being measured? We mean, what is the questionnaire that is being used?

It can be one of our standard questionnaires, off-the-shelf questionnaires (we have questionnaires for leadership, management, coaching and various others. We even have one for EI - emotional intelligence).

We've just introduced a questionnaire that has been prompted by the global pandemic.

The fact that people are working from home requires a slightly different set of skills, set of behaviours.

As well, some organizations are building their own questionnaires, and that's great. You know, we encourage bespoke questionnaires because then you're measuring things that people are familiar with. By the way, if you've just introduced a new set of behaviours or a behavioural framework, leadership standard, values or competency framework, 360 programs are going to be a major way of embedding that across the organization.

Outcomes

Anyway, let me come back. This is the agenda - the outcomes, what's going to happen to the participant. Remember we're talking to participants. So what are they going to get?

They're going to get an individual report. They're going to get (hopefully) some help in terms of interpreting the report and planning actions, development actions, arising from the data in the report. And hopefully, out of that, they'll be given resources to develop whether that be coaching, training, materials that they can use, reading, videos, whatever. But that's important to talk about.

Timetable

Also the timetable, you know, because it’s very important to make sure this fits with the expectations of the participants. It's quite interesting about the timing of 360s anyway…I mean I find that some organizations get the timing a bit wrong, they bring it in too close to the performance management process, the performance appraisal, and you know we've always tried to separate it from that. We recommend that this is about development. 360 is about development, it's not about performance measurement.

Also, where the organization is going through a lot of change, it's not always a good time to introduce 360. Maybe let the dust settle a little bit. Apart from anything else, in changed organizations relationships have often changed too…they can be quite new and therefore there's not a lot of feedback that people can give, as they haven't observed the participant long enough to be able to constructively score and comment.

How to choose your raters?

Once we've set all of the background, we're into this piece, which is, how do you choose raters? Rater selection is a really important step. Most organizations will allow participants to nominate their raters…and some would prefer to have those agreed in advance (and what they give us is a template, like a spreadsheet, that has all the rater networks listed), and we'll just upload that.

But importantly before that, whichever method is being used to get raters into the system, the concept of choice must be discussed. The two rules are:

#1 People that have observed you for long enough to be able to comment

#2 Are there people involved where the relationship isn't always good? In other words, a balance of raters, people where you perhaps want to improve the quality of the relationship that you have with them. The 360 can be an amazing catalyst for that.

So…it's about getting a balance - not just your mates.

Contracting around confidentiality

This is very important because raters want to know that their feedback, whether it be scores or comments, is anonymized. You know, it gives them more protection. It gives them more freedom to be truthful. So we always talk about confidentiality and of course, unless you are the only individual in a rater group (the only manager, for example), your feedback will be amalgamated with others and then averaged out. So anonymity is guaranteed.
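To make the amalgamation idea concrete, here is a minimal Python sketch of how scores might be averaged within each rater group, with single-rater groups flagged as identifiable. It is only an illustration under stated assumptions: the function name `summarize_scores`, the group labels, the sample scores and the "more than one rater" rule are all hypothetical and are not taken from any particular 360 tool.

```python
from collections import defaultdict
from statistics import mean


def summarize_scores(responses):
    """Average scores per rater group and flag groups with only one rater.

    `responses` is a list of (rater_group, score) pairs. The rule that a
    group is anonymous only when it has more than one rater is an
    assumption made for this sketch.
    """
    by_group = defaultdict(list)
    for group, score in responses:
        by_group[group].append(score)

    summary = {}
    for group, scores in by_group.items():
        summary[group] = {
            "average": mean(scores),       # individual scores are not reported
            "raters": len(scores),
            "anonymous": len(scores) > 1,  # a lone rater can be identified
        }
    return summary


# Hypothetical data: three peers and a single manager.
responses = [("peer", 4), ("peer", 2), ("peer", 3), ("manager", 5)]
summary = summarize_scores(responses)
```

In this sketch the three peer scores are reported only as an average, while the single manager's score is flagged as not anonymous, which is the exception worth pointing out when you talk about confidentiality.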

Demo screens

We will usually show some of the tools, so in other words, the questionnaire interface, some pages of the report, and so on…just to get people familiar with what's coming. And then we'll talk about this: the rater invitation conversation. And I want to bring up a slide specifically to cover that. The last thing we'll do, of course, is just open up the floor for questions, discussion, comments, etc…it's an interactive session. By the way, it typically takes an hour although it can be done in less. I mean, if time is at a huge premium, it's important to plan what you want to say very carefully and make sure that you can deliver it in that time frame. The other thing is, do record it because not everyone will turn up to the live session. So a recording makes it possible for everyone to get the same message.

Rater invitation conversations

So, let's go back to this item in the agenda here: the rater invitation conversation, I’m going to bring that up again...

What we want to do is give people (the participants) the opportunity to have a conversation with each rater. So this is each person that they want to provide them with feedback, and we recommend this approach. It's a 10-step approach to improve rater engagement.

#1 This is to set the scene, the context. This is a conversation, by the way. I want to make this clear. This is not an email. The participant must find the time to pick up the phone, or maybe organize a Zoom meeting (I mean, I've almost written off going to see people anymore because so many of us are working from home) but it's a conversation, it's verbal, and that first step is to set the context and also check out their 360 experience. Make sure that they're okay with doing a 360 on you.

#2 Explain what it is if they've never heard of it, and the process and so on.

#3 Specific, observable behaviours. I will highlight this, bring it out, because what we're really after in the 360 feedback is commentary. So in other words, qualitative comments that help bring scores to life. And that would be examples where the person has either done something or not done something, and/or said something, or not said something.

And that example, that SOB (specific, observable behaviour), can be extremely helpful in providing depth and clarity and richness to the 360 reports. So we encourage participants to ask for those from each of the raters.

#5 Now when we talk about the time question, it's really about how long is it going to take? Everyone's going to want to ask that question. The answer is, it will depend obviously on the questionnaire: how many questions there are. But typically for our questionnaires, the actual questionnaire piece can be as little as 10 to 12 minutes. The problem is that people want to write comments and that can take however long it takes, but I would suggest allowing another twenty minutes or so to add good quality comments.

Most systems - ours included - enable the user to actually leave and come back. You know, they can save and exit and then come back in the same place.

#6 Confidentiality: raters are going to want assurance that their data will be anonymized.

#7 Describe the outcomes. Interestingly, we recommend that the participant has follow-up conversations (once they've got their report) with individuals and this would be the time to check that that's okay. And that's really to get more clarity, more depth after they've seen their report so that they can build a much more robust and focused action plan.

#8 Then explain timings: when is it kicking off, how long is it open for. Check first of all that the timing works but also that they have the capacity within that timeframe to do this, because it is quite a gift that you're asking.

#9 And then ask for their commitment. Most people have their way of doing this. I will typically ask: Are you up for it? Are you able to do that? Are you happy to do it?

And then #10…the last one is to thank them sincerely. I mentioned that this is a huge gift people are giving you and most of us are pretty busy already. So, thank them sincerely and mean it.

And that's it.

I hope you can adopt this approach, the Awareness Briefing Approach. It'll improve the quantity of feedback you get but, much more importantly, the quality of that feedback, giving the individual participant a greater richness of data which will help them build a better development action plan. That's it for now.

Thanks very much.

Colin Newbold